Background

In a previous article I explored the performance of different Linux RAID configurations in a situation where there are two very mismatched devices.

The two devices are a Samsung SM883 SATA SSD and a Samsung PM983 NVMe. Both of these devices are very fast, but the NVMe can be about 6 times faster than the SSD for random (4KiB) reads (605,000 versus 98,200 IOPS in the data tables below).

The previous article established that due to performance optimisations in Linux RAID-1 targeted at non-rotational devices like SSDs, RAID-1 outperforms RAID-10 by about 3x for random reads in this unbalanced setup.

RAID-10 Layouts

A respondent on the linux-raid list suggested I test out different RAID-10 layouts. The default RAID-10 layout on Linux is called near and corresponds to standard RAID-10. There are also two alternative layouts, far and offset. Wikipedia has a good article on the differences between these three layouts.
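As a rough illustration of how the three layouts place data, here is a toy Python model for a small array. It is only a sketch of the placement rules as I understand them, not the md driver's code. `raid10_layout` is a hypothetical helper made up for this article, and for brevity the far layout's second copy is placed directly after the first, whereas on a real device it would sit in the second half of each device.

```python
def raid10_layout(layout, devices=2, copies=2, chunks=4):
    """Return grid[row][device] of chunk labels for a toy RAID-10 array.

    Illustrative model only: the names and the compact row numbering
    are assumptions, not taken from the md driver.
    """
    if chunks % devices:
        raise ValueError("chunks must be a multiple of devices in this sketch")
    rows_per_copy = chunks // devices
    grid = [[None] * devices for _ in range(rows_per_copy * copies)]
    for chunk in range(chunks):
        for copy in range(copies):
            if layout == "near":
                # Copies of a chunk sit next to each other in the same stripe.
                row, dev = divmod(chunk * copies + copy, devices)
            elif layout == "far":
                # First section is a plain stripe; each later section holds
                # a copy of it, shifted along by one device per copy.
                row = copy * rows_per_copy + chunk // devices
                dev = (chunk + copy) % devices
            elif layout == "offset":
                # Every stripe is followed at once by its rotated copy.
                row = (chunk // devices) * copies + copy
                dev = (chunk + copy) % devices
            else:
                raise ValueError(f"unknown layout: {layout}")
            grid[row][dev] = f"A{chunk}"
    return grid


for name in ("near", "far", "offset"):
    print(name)
    for row in raid10_layout(name):
        print("   ", "  ".join(row))
```

With two devices the near layout comes out identical on both devices, which is why it looks like RAID-1 on disk, while far and offset both stripe the data across the two devices.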

Charts


Sequential IO

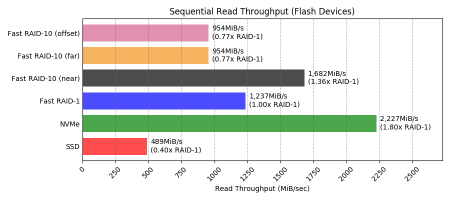

Reads

The far and offset layouts perform the same: about twice the speed of a single SSD, but only ~77% of RAID-1 and, interestingly, only ~57% of the RAID-10 near layout.

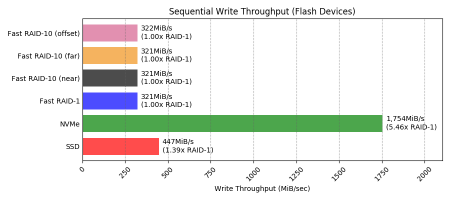

Writes

All layouts perform the same for sequential writes (the same as RAID-1).

Random IO

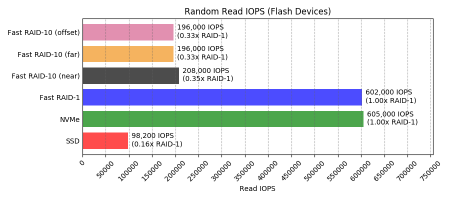

Reads

The far and offset layouts performed slightly worse than near (~94%) and still reached only about a third of RAID-1.

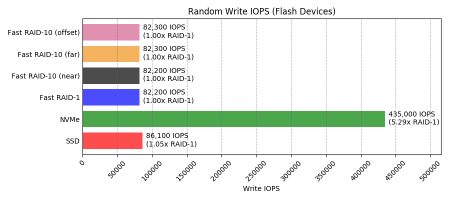

Writes

All layouts of RAID-10 perform the same as RAID-1 for random writes.

Data Tables

This is just the raw data for the charts above. Skip to the conclusions if you’re not interested in seeing the numbers for the things you already saw as pictures.

Sequential IO

Throughput (MiB/s):

| Test | SSD | NVMe | HDD | Fast RAID-1 | Fast RAID-10 (near) | Fast RAID-10 (far) | Fast RAID-10 (offset) | Slow RAID-1 | Slow RAID-10 (near) |
|---|---|---|---|---|---|---|---|---|---|
| Read | 489 | 2,227 | 26 | 1,237 | 1,682 | 954 | 954 | 198 | 188 |
| Write | 447 | 1,754 | 20 | 321 | 321 | 321 | 322 | 18 | 19 |

Random IO

IOPS:

| Test | SSD | NVMe | HDD | Fast RAID-1 | Fast RAID-10 (near) | Fast RAID-10 (far) | Fast RAID-10 (offset) | Slow RAID-1 | Slow RAID-10 (near) |
|---|---|---|---|---|---|---|---|---|---|
| Random Read | 98,200 | 605,000 | 256 | 602,000 | 208,000 | 196,000 | 196,000 | 501 | 501 |
| Random Write | 86,100 | 435,000 | 74 | 82,200 | 82,200 | 82,300 | 82,300 | 25 | 21 |
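The relative figures quoted alongside the charts can be recomputed from these tables. The Python below is just a small sketch of that arithmetic; the dictionary names and the `percent_of` helper are made up for illustration, and only the read results for the fast devices are included.

```python
# Read results for the fast devices, copied from the two tables above.
SEQ_READ_MIB_S = {"SSD": 489, "RAID-1": 1237, "near": 1682, "far": 954, "offset": 954}
RAND_READ_IOPS = {"SSD": 98_200, "RAID-1": 602_000, "near": 208_000,
                  "far": 196_000, "offset": 196_000}


def percent_of(results, name, baseline):
    """Return results[name] as a whole-number percentage of results[baseline]."""
    return round(100 * results[name] / results[baseline])


for label, results in (("sequential read", SEQ_READ_MIB_S),
                       ("random read", RAND_READ_IOPS)):
    for baseline in ("SSD", "RAID-1", "near"):
        pct = percent_of(results, "far", baseline)
        print(f"{label}: far is {pct}% of {baseline}")
```

Since far and offset produced the same read figures, the percentages for offset are identical.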

Conclusions

I was not able to see any difference between the two non-default Linux RAID-10 layouts (far and offset) on my devices, and I think it's likely this holds for non-rotational devices in general.

The far and offset layouts performed significantly worse than the default near layout for sequential read IO, and no better than the default near layout in any other scenario.

Since layouts other than the default near restrict the reshaping options for RAID-10, I don’t recommend using them for RAID-10 composed entirely of non-rotational devices.

Additionally, if the devices differ greatly in performance from each other, as mine do, then it remains best to use RAID-1.

Appendix

Setup

I’ll only cover what has changed from the previous article.

Partitioning

I added two extra 10GiB partitions on each device; one for testing the far layout and the other for testing the offset layout.

$ sudo gdisk /dev/sdc

GPT fdisk (gdisk) version 1.0.3

Partition table scan:

MBR: protective

BSD: not present

APM: not present

GPT: present

Found valid GPT with protective MBR; using GPT.

Command (? for help): p

Disk /dev/sdc: 7501476528 sectors, 3.5 TiB

Model: SAMSUNG MZ7KH3T8

Sector size (logical/physical): 512/4096 bytes

Disk identifier (GUID): 7D7DFDA2-502C-47FE-A437-5442CCCE7E6B

Partition table holds up to 128 entries

Main partition table begins at sector 2 and ends at sector 33

First usable sector is 34, last usable sector is 7501476494

Partitions will be aligned on 2048-sector boundaries

Total free space is 7438561901 sectors (3.5 TiB)

Number Start (sector) End (sector) Size Code Name

1 2048 20973567 10.0 GiB 8300 Linux filesystem

2 20973568 41945087 10.0 GiB 8300 Linux filesystem

3 41945088 62916607 10.0 GiB 8300 Linux filesystem

Command (? for help): n

Partition number (4-128, default 4):

First sector (34-7501476494, default = 62916608) or {+-}size{KMGTP}:

Last sector (62916608-7501476494, default = 7501476494) or {+-}size{KMGTP}: +10g

Current type is 'Linux filesystem'

Hex code or GUID (L to show codes, Enter = 8300):

Changed type of partition to 'Linux filesystem'

Command (? for help): n

Partition number (5-128, default 5):

First sector (34-7501476494, default = 83888128) or {+-}size{KMGTP}:

Last sector (83888128-7501476494, default = 7501476494) or {+-}size{KMGTP}: +10g

Current type is 'Linux filesystem'

Hex code or GUID (L to show codes, Enter = 8300):

Changed type of partition to 'Linux filesystem'

Command (? for help): w

Final checks complete. About to write GPT data. THIS WILL OVERWRITE EXISTING

PARTITIONS!!

Do you want to proceed? (Y/N): y

OK; writing new GUID partition table (GPT) to /dev/sdc.

Warning: The kernel is still using the old partition table.

The new table will be used at the next reboot or after you

run partprobe(8) or kpartx(8)

The operation has completed successfully.

$ sudo gdisk /dev/nvme0n1

GPT fdisk (gdisk) version 1.0.3

Partition table scan:

MBR: protective

BSD: not present

APM: not present

GPT: present

Found valid GPT with protective MBR; using GPT.

Command (? for help): p

Disk /dev/nvme0n1: 7501476528 sectors, 3.5 TiB

Model: SAMSUNG MZQLB3T8HALS-00007

Sector size (logical/physical): 512/512 bytes

Disk identifier (GUID): C6F311B7-BE47-47C1-A1CB-F0A6D8C13136

Partition table holds up to 128 entries

Main partition table begins at sector 2 and ends at sector 33

First usable sector is 34, last usable sector is 7501476494

Partitions will be aligned on 2048-sector boundaries

Total free space is 7438561901 sectors (3.5 TiB)

Number Start (sector) End (sector) Size Code Name

1 2048 20973567 10.0 GiB 8300 Linux filesystem

2 20973568 41945087 10.0 GiB 8300 Linux filesystem

3 41945088 62916607 10.0 GiB 8300 Linux filesystem

Command (? for help): n

Partition number (4-128, default 4):

First sector (34-7501476494, default = 62916608) or {+-}size{KMGTP}:

Last sector (62916608-7501476494, default = 7501476494) or {+-}size{KMGTP}: +10g

Current type is 'Linux filesystem'

Hex code or GUID (L to show codes, Enter = 8300):

Changed type of partition to 'Linux filesystem'

Command (? for help): n

Partition number (5-128, default 5):

First sector (34-7501476494, default = 83888128) or {+-}size{KMGTP}:

Last sector (83888128-7501476494, default = 7501476494) or {+-}size{KMGTP}: +10g

Current type is 'Linux filesystem'

Hex code or GUID (L to show codes, Enter = 8300):

Changed type of partition to 'Linux filesystem'

Command (? for help): w

Final checks complete. About to write GPT data. THIS WILL OVERWRITE EXISTING

PARTITIONS!!

Do you want to proceed? (Y/N): y

OK; writing new GUID partition table (GPT) to /dev/nvme0n1.

Warning: The kernel is still using the old partition table.

The new table will be used at the next reboot or after you

run partprobe(8) or kpartx(8)

The operation has completed successfully.

$ sudo partprobe /dev/sdc

$ sudo partprobe /dev/nvme0n1

Array creation

$ sudo mdadm --create \
    --verbose \
    --assume-clean \
    /dev/md8 \
    --level=10 \
    --raid-devices=2 \
    --layout=f2 \
    /dev/sdc4 /dev/nvme0n1p4

mdadm: chunk size defaults to 512K

mdadm: size set to 10476544K

mdadm: Defaulting to version 1.2 metadata

mdadm: array /dev/md8 started.

$ sudo mdadm --create \
    --verbose \
    --assume-clean \
    /dev/md9 \
    --level=10 \
    --raid-devices=2 \
    --layout=o2 \
    /dev/sdc5 /dev/nvme0n1p5

mdadm: chunk size defaults to 512K

mdadm: size set to 10476544K

mdadm: Defaulting to version 1.2 metadata

mdadm: array /dev/md9 started.

$ cat /proc/mdstat

Personalities : [linear] [multipath] [raid0] [raid1] [raid10] [raid6] [raid5] [raid4]

md9 : active raid10 nvme0n1p5[1] sdc5[0]

10476544 blocks super 1.2 512K chunks 2 offset-copies [2/2] [UU]

md8 : active raid10 nvme0n1p4[1] sdc4[0]

10476544 blocks super 1.2 512K chunks 2 far-copies [2/2] [UU]

md7 : active raid10 sde3[1] sdd3[0]

10476544 blocks super 1.2 2 near-copies [2/2] [UU]

md6 : active raid1 sde2[1] sdd2[0]

10476544 blocks super 1.2 [2/2] [UU]

md5 : active raid10 nvme0n1p3[1] sdc3[0]

10476544 blocks super 1.2 2 near-copies [2/2] [UU]

md4 : active raid1 nvme0n1p2[1] sdc2[0]

10476544 blocks super 1.2 [2/2] [UU]

md2 : active (auto-read-only) raid10 sda3[0] sdb3[1]

974848 blocks super 1.2 2 near-copies [2/2] [UU]

md0 : active raid1 sdb1[1] sda1[0]

497664 blocks super 1.2 [2/2] [UU]

md1 : active raid10 sda2[0] sdb2[1]

1950720 blocks super 1.2 2 near-copies [2/2] [UU]

md3 : active raid10 sda5[0] sdb5[1]

12025856 blocks super 1.2 2 near-copies [2/2] [UU]

unused devices: <none>
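If you want to confirm which layout each array ended up with without reading through the whole listing, the layout can be picked out of the mdstat text. This is a minimal Python sketch, assuming the line format shown above; `raid10_layouts` and `MDSTAT_SAMPLE` are hypothetical names, and the sample is a three-array excerpt of the listing.

```python
import re

# Excerpt of the /proc/mdstat listing shown above.
MDSTAT_SAMPLE = """\
md9 : active raid10 nvme0n1p5[1] sdc5[0]
      10476544 blocks super 1.2 512K chunks 2 offset-copies [2/2] [UU]
md8 : active raid10 nvme0n1p4[1] sdc4[0]
      10476544 blocks super 1.2 512K chunks 2 far-copies [2/2] [UU]
md5 : active raid10 nvme0n1p3[1] sdc3[0]
      10476544 blocks super 1.2 2 near-copies [2/2] [UU]
"""


def raid10_layouts(mdstat_text):
    """Map each RAID-10 array name to a (layout, copies) tuple."""
    layouts = {}
    current = None
    for line in mdstat_text.splitlines():
        header = re.match(r"(md\d+) : ", line)
        if header:
            # Remember which array the following detail line belongs to.
            current = header.group(1)
            continue
        copies = re.search(r"(\d+) (near|far|offset)-copies", line)
        if copies and current:
            layouts[current] = (copies.group(2), int(copies.group(1)))
    return layouts


print(raid10_layouts(MDSTAT_SAMPLE))
```

On a live system the same function could be fed the contents of /proc/mdstat instead of the sample. RAID-1 arrays have no "-copies" field, so they are simply left out of the result.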

Raw fio Output

Only output from the tests on the arrays with non-default layouts is shown here; the rest is in the previous article.

This is a lot of output and it’s the last thing in this article, so if you’re not interested in it you should stop reading now.

fast-raid10-f2_seqread: (g=0): rw=read, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=32

...

fio-3.13-42-g8066f

Starting 4 processes

fast-raid10-f2_seqread: Laying out IO file (1 file / 8192MiB)

fast-raid10-f2_seqread: (groupid=0, jobs=4): err= 0: pid=5287: Sun Jun 2 00:18:35 2019

read: IOPS=244k, BW=954MiB/s (1001MB/s)(32.0GiB/34340msec)

bw ( KiB/s): min=968176, max=984312, per=100.00%, avg=977239.00, stdev=740.55, samples=272

iops : min=242044, max=246078, avg=244309.69, stdev=185.14, samples=272

cpu : usr=6.98%, sys=33.05%, ctx=738159, majf=0, minf=167

IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.1%, 32=100.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.1%, 64=0.0%, >=64=0.0%

issued rwts: total=8388608,0,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=32

Run status group 0 (all jobs):

READ: bw=954MiB/s (1001MB/s), 954MiB/s-954MiB/s (1001MB/s-1001MB/s), io=32.0GiB (34.4GB), run=34340-34340msec

Disk stats (read/write):

md8: ios=8379702/75, merge=0/0, ticks=0/0, in_queue=0, util=0.00%, aggrios=2767802/15, aggrmerge=1426480/64, aggrticks=618421/9, aggrin_queue=604770, aggrutil=99.93%

nvme0n1: ios=4194304/15, merge=0/64, ticks=154683/0, in_queue=160368, util=99.93%

sdc: ios=1341300/16, merge=2852961/64, ticks=1082160/19, in_queue=1049172, util=99.81%

fast-raid10-o2_seqread: (g=0): rw=read, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=32

...

fio-3.13-42-g8066f

Starting 4 processes

fast-raid10-o2_seqread: Laying out IO file (1 file / 8192MiB)

fast-raid10-o2_seqread: (groupid=0, jobs=4): err= 0: pid=5312: Sun Jun 2 00:19:31 2019

read: IOPS=244k, BW=954MiB/s (1000MB/s)(32.0GiB/34358msec)

bw ( KiB/s): min=969458, max=981640, per=100.00%, avg=976601.62, stdev=607.72, samples=272

iops : min=242364, max=245410, avg=244150.46, stdev=151.95, samples=272

cpu : usr=5.91%, sys=33.95%, ctx=732590, majf=0, minf=162

IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.1%, 32=100.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.1%, 64=0.0%, >=64=0.0%

issued rwts: total=8388608,0,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=32

Run status group 0 (all jobs):

READ: bw=954MiB/s (1000MB/s), 954MiB/s-954MiB/s (1000MB/s-1000MB/s), io=32.0GiB (34.4GB), run=34358-34358msec

Disk stats (read/write):

md9: ios=8385126/75, merge=0/0, ticks=0/0, in_queue=0, util=0.00%, aggrios=2766910/15, aggrmerge=1427340/64, aggrticks=618657/10, aggrin_queue=606194, aggrutil=99.99%

nvme0n1: ios=4194304/15, merge=0/64, ticks=157297/1, in_queue=163632, util=99.94%

sdc: ios=1339516/16, merge=2854681/64, ticks=1080017/19, in_queue=1048756, util=99.99%

fast-raid10-f2_seqwrite: (g=0): rw=write, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=32

...

fio-3.13-42-g8066f

Starting 4 processes

fast-raid10-f2_seqwrite: (groupid=0, jobs=4): err= 0: pid=5337: Sun Jun 2 00:21:13 2019

write: IOPS=82.2k, BW=321MiB/s (337MB/s)(32.0GiB/101992msec); 0 zone resets

bw ( KiB/s): min=315288, max=336184, per=99.99%, avg=328946.06, stdev=670.42, samples=812

iops : min=78822, max=84046, avg=82236.45, stdev=167.60, samples=812

cpu : usr=2.15%, sys=34.88%, ctx=973042, majf=0, minf=38

IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.1%, 32=100.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.1%, 64=0.0%, >=64=0.0%

issued rwts: total=0,8388608,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=32

Run status group 0 (all jobs):

WRITE: bw=321MiB/s (337MB/s), 321MiB/s-321MiB/s (337MB/s-337MB/s), io=32.0GiB (34.4GB), run=101992-101992msec

Disk stats (read/write):

md8: ios=0/8380840, merge=0/0, ticks=0/0, in_queue=0, util=0.00%, aggrios=0/8388206, aggrmerge=0/461, aggrticks=0/704880, aggrin_queue=724510, aggrutil=100.00%

nvme0n1: ios=0/8388649, merge=0/20, ticks=0/123227, in_queue=202792, util=100.00%

sdc: ios=0/8387763, merge=0/902, ticks=0/1286533, in_queue=1246228, util=98.78%

fast-raid10-o2_seqwrite: (g=0): rw=write, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=32

...

fio-3.13-42-g8066f

Starting 4 processes

fast-raid10-o2_seqwrite: (groupid=0, jobs=4): err= 0: pid=5366: Sun Jun 2 00:22:56 2019

write: IOPS=82.4k, BW=322MiB/s (337MB/s)(32.0GiB/101820msec); 0 zone resets

bw ( KiB/s): min=316248, max=420304, per=100.00%, avg=331319.30, stdev=3808.39, samples=807

iops : min=79062, max=105076, avg=82829.76, stdev=952.10, samples=807

cpu : usr=2.19%, sys=34.22%, ctx=975496, majf=0, minf=37

IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.1%, 32=100.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.1%, 64=0.0%, >=64=0.0%

issued rwts: total=0,8388608,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=32

Run status group 0 (all jobs):

WRITE: bw=322MiB/s (337MB/s), 322MiB/s-322MiB/s (337MB/s-337MB/s), io=32.0GiB (34.4GB), run=101820-101820msec

Disk stats (read/write):

md9: ios=0/8374085, merge=0/0, ticks=0/0, in_queue=0, util=0.00%, aggrios=0/8388242, aggrmerge=0/442, aggrticks=0/704724, aggrin_queue=728030, aggrutil=100.00%

nvme0n1: ios=0/8388657, merge=0/21, ticks=0/124463, in_queue=211316, util=100.00%

sdc: ios=0/8387828, merge=0/864, ticks=0/1284985, in_queue=1244744, util=98.83%

fast-raid10-f2_randread: (g=0): rw=randread, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=32

...

fio-3.13-42-g8066f

Starting 4 processes

fast-raid10-f2_randread: (groupid=0, jobs=4): err= 0: pid=5412: Sun Jun 2 00:23:39 2019

read: IOPS=196k, BW=767MiB/s (804MB/s)(32.0GiB/42725msec)

bw ( KiB/s): min=753863, max=816072, per=99.95%, avg=784998.94, stdev=3053.58, samples=340

iops : min=188465, max=204018, avg=196249.72, stdev=763.40, samples=340

cpu : usr=4.97%, sys=25.34%, ctx=884047, majf=0, minf=161

IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.1%, 32=100.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.1%, 64=0.0%, >=64=0.0%

issued rwts: total=8388608,0,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=32

Run status group 0 (all jobs):

READ: bw=767MiB/s (804MB/s), 767MiB/s-767MiB/s (804MB/s-804MB/s), io=32.0GiB (34.4GB), run=42725-42725msec

Disk stats (read/write):

md8: ios=8371889/4, merge=0/0, ticks=0/0, in_queue=0, util=0.00%, aggrios=4191336/15, aggrmerge=2963/1, aggrticks=1470317/6, aggrin_queue=854184, aggrutil=100.00%

nvme0n1: ios=4194304/15, merge=0/1, ticks=317755/0, in_queue=338708, util=100.00%

sdc: ios=4188368/16, merge=5926/2, ticks=2622880/12, in_queue=1369660, util=99.90%

fast-raid10-o2_randread: (g=0): rw=randread, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=32

...

fio-3.13-42-g8066f

Starting 4 processes

fast-raid10-o2_randread: (groupid=0, jobs=4): err= 0: pid=5437: Sun Jun 2 00:24:22 2019

read: IOPS=196k, BW=767MiB/s (804MB/s)(32.0GiB/42725msec)

bw ( KiB/s): min=741672, max=832016, per=99.96%, avg=785051.96, stdev=4207.46, samples=340

iops : min=185418, max=208004, avg=196262.98, stdev=1051.86, samples=340

cpu : usr=4.51%, sys=25.36%, ctx=886783, majf=0, minf=164

IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.1%, 32=100.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.1%, 64=0.0%, >=64=0.0%

issued rwts: total=8388608,0,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=32

Run status group 0 (all jobs):

READ: bw=767MiB/s (804MB/s), 767MiB/s-767MiB/s (804MB/s-804MB/s), io=32.0GiB (34.4GB), run=42725-42725msec

Disk stats (read/write):

md9: ios=8371755/4, merge=0/0, ticks=0/0, in_queue=0, util=0.00%, aggrios=4191564/7, aggrmerge=2733/1, aggrticks=1469572/3, aggrin_queue=853154, aggrutil=100.00%

nvme0n1: ios=4194304/7, merge=0/1, ticks=317525/0, in_queue=336088, util=100.00%

sdc: ios=4188825/8, merge=5466/1, ticks=2621620/6, in_queue=1370220, util=99.87%

fast-raid10-f2_randwrite: (g=0): rw=randwrite, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=32

...

fio-3.13-42-g8066f

Starting 4 processes

fast-raid10-f2_randwrite: (groupid=0, jobs=4): err= 0: pid=5462: Sun Jun 2 00:26:04 2019

write: IOPS=82.3k, BW=321MiB/s (337MB/s)(32.0GiB/101961msec); 0 zone resets

bw ( KiB/s): min=318832, max=396249, per=100.00%, avg=329384.74, stdev=1762.35, samples=810

iops : min=79708, max=99061, avg=82346.02, stdev=440.57, samples=810

cpu : usr=2.42%, sys=34.38%, ctx=975633, majf=0, minf=39

IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.1%, 32=100.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.1%, 64=0.0%, >=64=0.0%

issued rwts: total=0,8388608,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=32

Run status group 0 (all jobs):

WRITE: bw=321MiB/s (337MB/s), 321MiB/s-321MiB/s (337MB/s-337MB/s), io=32.0GiB (34.4GB), run=101961-101961msec

Disk stats (read/write):

md8: ios=0/8383420, merge=0/0, ticks=0/0, in_queue=0, util=0.00%, aggrios=0/8388662, aggrmerge=0/17, aggrticks=0/704633, aggrin_queue=735234, aggrutil=100.00%

nvme0n1: ios=0/8388655, merge=0/14, ticks=0/123197, in_queue=208804, util=100.00%

sdc: ios=0/8388669, merge=0/20, ticks=0/1286069, in_queue=1261664, util=98.75%

fast-raid10-o2_randwrite: (g=0): rw=randwrite, bs=(R) 4096B-4096B, (W) 4096B-4096B, (T) 4096B-4096B, ioengine=libaio, iodepth=32

...

fio-3.13-42-g8066f

Starting 4 processes

fast-raid10-o2_randwrite: (groupid=0, jobs=4): err= 0: pid=5491: Sun Jun 2 00:27:47 2019

write: IOPS=82.3k, BW=322MiB/s (337MB/s)(32.0GiB/101880msec); 0 zone resets

bw ( KiB/s): min=315369, max=418520, per=100.00%, avg=330793.95, stdev=3466.64, samples=808

iops : min=78842, max=104630, avg=82698.46, stdev=866.66, samples=808

cpu : usr=2.21%, sys=34.38%, ctx=972875, majf=0, minf=39

IO depths : 1=0.1%, 2=0.1%, 4=0.1%, 8=0.1%, 16=0.1%, 32=100.0%, >=64=0.0%

submit : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.0%, 64=0.0%, >=64=0.0%

complete : 0=0.0%, 4=100.0%, 8=0.0%, 16=0.0%, 32=0.1%, 64=0.0%, >=64=0.0%

issued rwts: total=0,8388608,0,0 short=0,0,0,0 dropped=0,0,0,0

latency : target=0, window=0, percentile=100.00%, depth=32

Run status group 0 (all jobs):

WRITE: bw=322MiB/s (337MB/s), 322MiB/s-322MiB/s (337MB/s-337MB/s), io=32.0GiB (34.4GB), run=101880-101880msec

Disk stats (read/write):

md9: ios=0/8368626, merge=0/0, ticks=0/0, in_queue=0, util=0.00%, aggrios=0/8388667, aggrmerge=0/20, aggrticks=0/705086, aggrin_queue=732522, aggrutil=100.00%

nvme0n1: ios=0/8388658, merge=0/19, ticks=0/123370, in_queue=209792, util=100.00%

sdc: ios=0/8388677, merge=0/21, ticks=0/1286802, in_queue=1255252, util=98.95%
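The per-device "Disk stats" lines give a hint as to how the reads were shared between the two devices. The Python below is a rough sketch and was not part of the test run. `requests_per_device` is a made-up helper, and it assumes that completed read IOs plus read merges approximates the number of 4KiB reads sent to each device. The two input lines are the nvme0n1 and sdc lines from the far layout sequential read test.

```python
import re


def requests_per_device(disk_stat_lines):
    """Return {device: read ios + read merges} for fio per-device stat lines.

    Assumption: completed IOs plus merged requests approximates the
    number of 4KiB reads that were sent to each device.
    """
    totals = {}
    for line in disk_stat_lines:
        m = re.match(r"\s*(\w+): ios=(\d+)/\d+, merge=(\d+)/\d+", line)
        if m:
            totals[m.group(1)] = int(m.group(2)) + int(m.group(3))
    return totals


# Per-device lines from the fast-raid10-f2_seqread job above.
f2_seqread = [
    "nvme0n1: ios=4194304/15, merge=0/64, ticks=154683/0, in_queue=160368, util=99.93%",
    "sdc: ios=1341300/16, merge=2852961/64, ticks=1082160/19, in_queue=1049172, util=99.81%",
]

totals = requests_per_device(f2_seqread)
grand_total = sum(totals.values())
for device, count in totals.items():
    print(f"{device}: {count} reads, {100 * count / grand_total:.1f}% of the total")
```

Under that assumption each device received almost exactly half of the reads. That would be consistent with the far layout topping out at about twice the speed of the SSD on its own, although I have not confirmed this is the cause.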