Including remote data in a MediaWiki article

A few months ago I needed to include some data, generated and held remotely, in a MediaWiki article.

Here's the solution I chose which enabled me to generate some tables populated with data that only exists in some remote YAML files:

I did actually do all this back in early April, but as I couldn't read my own blog site at the time I had to set up a new blog before I could write about it! 😀

Background

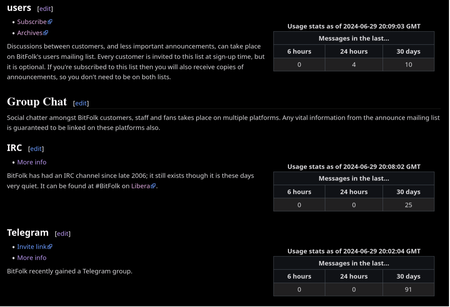

All the way back in March 2024 I'd decided that BitFolk probably should have some alternative chat venue to its IRC channel, which had been largely silent for quite some time. So, I'd opened a Telegram group and spruced up the Community article on BitFolk's wiki.

When writing about the new thing in the article I got to thinking how I feel when I see a project with a bunch of different contact methods listed.

I'm usually glad to see that a project has ways to contact them that I don't consider awful, but if all the ones that I consider non-awful are actually deserted, barren and disused then I'd like to be able to decide whether I would actually want to hold my nose and go to a Discord, or some other service I would ordinarily dislike.

So, it's not just that these things exist — easy to just list off — but I decided I would like to also include some information about how active these things are (or not).

The problem

BitFolk's wiki is a MediaWiki site, so including any sort of dynamic content that isn't already implemented in the software would require code changes or an extension.

The one solution that doesn't involve developing something or using an existing extension would be to put a HTML <iframe> in a template that's set to allow raw HTML. <iframe>s aren't normally allowed in general articles due to the havoc they could cause with a population of untrusted authors, but putting them in templates would be okay since the content they would include could be locked down that way.

The appearance of such a thing, though, is just not very nice without a lot of styling work: it's basically a web site inside a web site. I had a hunch that there would be existing extensions for including structured remote data. And there are!

External_Data extension

The extension I settled on is called External_Data.

Allows for using and displaying values retrieved from various sources: external URLs and SOAP services, local wiki pages and local files (in CSV, JSON, XML and other formats), database tables, LDAP servers and local programs output.

Just what I was looking for!

While this extension can just include plain text, there are other, simpler extensions I could have used if I just wanted to do that. You see, each of the sets of activity stats will have to be generated by a program specific to each service; counting mailing list posts is not like counting IRC messages, and so on.

I wanted to write programs that would store this information in a structured format like YAML and then External_Data would be used to turn each of those remote YAML files into a table.

Example YAML data

I structured the output of my programs like this:

    ---
    bitfolk:
      messages_last_30day: 91
      messages_last_6hour: 0
      messages_last_day: 0
      stats_at:
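
As a rough illustration of what such a program might look like, here is a minimal Python sketch. It is not the actual code behind these files: the function names, the window definitions and the timestamp format are all assumptions, and a real program would first have to extract message timestamps from the service in question.

```python
from datetime import datetime, timedelta, timezone


def count_recent(timestamps, now):
    """Count messages seen in the last 6 hours, 24 hours and 30 days."""
    windows = {
        "messages_last_6hour": timedelta(hours=6),
        "messages_last_day": timedelta(days=1),
        "messages_last_30day": timedelta(days=30),
    }
    return {
        key: sum(1 for ts in timestamps if now - span <= ts <= now)
        for key, span in windows.items()
    }


def to_yaml(group, counts, now):
    """Render the counts as a small YAML document, keys sorted by name."""
    lines = ["---", f"{group}:"]
    for key in sorted(counts):
        lines.append(f"  {key}: {counts[key]}")
    # The timestamp format is a guess, not taken from the real files.
    lines.append(f'  stats_at: "{now.strftime("%Y-%m-%d %H:%M")}"')
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    # Made-up sample data: messages 1, 10 and 100 hours ago.
    sample = [now - timedelta(hours=h) for h in (1, 10, 100)]
    print(to_yaml("bitfolk", count_recent(sample, now), now), end="")
```

Writing the YAML by hand keeps the sketch free of third-party dependencies; with a structure this small a YAML library would be optional.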

Markup in the wiki article

In the wiki article, that data is fetched and formatted like this:

    {{#get_web_data:url=https://ruminant.bitfolk.com/social-stats/tg.yaml
    |format=yaml
    |data=bftg_stats_at=stats_at,bftg_last_6hour=messages_last_6hour,bftg_last_day=messages_last_day,bftg_last_30day=messages_last_30day
    }}
    {| class="wikitable" style="float:right; width:25em; margin:1em"
    |+ Usage stats as of {{#external_value:bftg_stats_at}} GMT
    |-
    !colspan="3" | Messages in the last…
    |-
    ! 6 hours || 24 hours || 30 days
    |- style="text-align:center"
    | {{#external_value:bftg_last_6hour}}
    | {{#external_value:bftg_last_day}}
    | {{#external_value:bftg_last_30day}}
    |}

How it works

- Data is requested from a remote URL (https://ruminant.bitfolk.com/social-stats/tg.yaml).

- It's parsed as YAML.

- Variables from the YAML are stored in variables in the article, e.g. bftg_stats_at is set to the value of stats_at from the YAML.

- A table in wiki syntax is made and the data inserted into it with directives like {{#external_value:bftg_stats_at}}.

This could obviously be made cleaner by putting all the wiki markup in a template and just calling that with the variables.
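
The variable mapping step in the list above can be modelled in a few lines of Python. This is only a mental model, not the extension's own PHP code: it assumes the YAML has already been parsed into nested dictionaries and that a key is picked up wherever it is nested. All three function names are made up for the illustration.

```python
def parse_data_param(data):
    """Turn 'local=remote,local2=remote2' into {'local': 'remote', ...}."""
    mapping = {}
    for pair in data.split(","):
        local, remote = pair.strip().split("=", 1)
        mapping[local] = remote
    return mapping


def find_key(tree, wanted):
    """Depth-first search for the first value stored under `wanted`."""
    if isinstance(tree, dict):
        if wanted in tree:
            return tree[wanted]
        children = list(tree.values())
    elif isinstance(tree, list):
        children = tree
    else:
        return None
    for child in children:
        found = find_key(child, wanted)
        if found is not None:
            return found
    return None


def external_values(parsed, data):
    """Map each local variable name to the value of its remote key."""
    return {
        local: find_key(parsed, remote)
        for local, remote in parse_data_param(data).items()
    }


if __name__ == "__main__":
    # The counts from the example YAML, as a parser would hand them over.
    parsed = {
        "bitfolk": {
            "messages_last_30day": 91,
            "messages_last_6hour": 0,
            "messages_last_day": 0,
        }
    }
    data = (
        "bftg_last_6hour=messages_last_6hour,"
        "bftg_last_day=messages_last_day,"
        "bftg_last_30day=messages_last_30day"
    )
    print(external_values(parsed, data))
    # prints {'bftg_last_6hour': 0, 'bftg_last_day': 0, 'bftg_last_30day': 91}
```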

Wrinkle: MediaWiki's caching

MediaWiki caches quite aggressively, which makes a lot of sense: it's expensive for some PHP to request wiki markup out of a database and convert it into HTML every time when it almost certainly hasn't changed since the last time someone looked at it. But that frustrates what I'm trying to do here. The remote data does update and MediaWiki doesn't know about that!

In theory it looks like it is possible to adjust cache times per article (or even per remote URI) but I didn't have much success getting that to work.

It is possible to force an article's cache to be purged with just a POST request though, so I solved the problem by having each of my activity summarising programs issue such a request when their job is done. This will do it:

    curl -s -X POST 'https://tools.bitfolk.com/wiki/Community?action=purge'

They only run once an hour anyway, so it's not a big deal.
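
If the stats program happens to be written in Python, the same purge could be issued without shelling out to curl. The following is a minimal sketch using only the standard library; the function names and the timeout are assumptions, and it relies on the wiki accepting a plain POST for the purge, as described above.

```python
import urllib.request


def build_purge_request(article_url):
    """Build a POST request for MediaWiki's action=purge on one article."""
    separator = "&" if "?" in article_url else "?"
    return urllib.request.Request(
        article_url + separator + "action=purge",
        data=b"",  # empty body; what matters is that the method is POST
        method="POST",
    )


def purge(article_url, timeout=10):
    """Send the purge request and return the HTTP status code."""
    request = build_purge_request(article_url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status
```

Calling purge("https://tools.bitfolk.com/wiki/Community") as the last step of a stats run should then have the same effect as the curl command.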

Concerns?

Isn't it dangerous to allow article authors to include arbitrary remote data?

Yes! The main wiki configuration can have a section added which sets an allowlist of domains or even URI prefixes for what is allowed to be included.

What if the remote data becomes unavailable?

The extension has settings for how stale data can be before it's rejected. In this case it's a trivial use so it doesn't really matter, of course.
